In German folklore, Faust trades his soul for knowledge. Even if we value knowledge highly we might wonder if this was such a good idea1.

Soulful considerations aside, is a Faustian bargain even the best way to get knowledge from the devil? In the 1500s the Catholic Church suggested an alternative. The Promoter of the Faith was a person who would promote the vices, not virtues, of a potential saint. The idea was that the clergy could be more confident that sainthood was deserved after hearing both sides: the case for and the case against the canonisation of a would-be saint2.

Often, conversation is for social benefits (establishing common ground, making each other laugh etc). However, one other goal of conversation that we sometimes pursue is knowledge-seeking.

In On Compromise, we defined a concave problem as one in which the optimal solution lies between two extreme solutions. I wrote:

A person in a knowledge-seeking community when faced with a concave problem “should [for an optimal solution] be in community, acting as individuals in a collective, as neurons are to a brain. The emergent system, not the constituent nodes, may have a higher wisdom that is found only in the coming together of people and ideas.

Several social institutions are based on this principle. When we anticipate compromise to be effective we tend to develop adversarial systems (political parties, legal systems etc.) designed to converge optimally in concave environments.”

Examples of adversarial systems include:

👩‍⚖️ Legal systems: the prosecution and the defence presenting their sides of the case to get to the verdict

💸 Price discovery in a marketplace: a stock market with participants forecasting the level of success of a public business

🤖 In Machine Learning, Generative Adversarial Networks (GANs): a structure where one AI evaluates the ‘realness’ of data (images, say) generated by another AI that is trying to fool it into thinking the images are not artificial3.

More generally an Adversarial System is where two parties pursue opposing instrumental aims toward a shared end goal. For example, the defence tries to acquit the innocent and the prosecution tries to punish the guilty, towards the ultimate joint goal4 of achieving justice.

Adversarial Systems are defined by a three-part epistemic dance: the dialectical waltz of thesis, antithesis, and then, synthesis.

1. The Adversarial Approach

Why do we need Adversaries? We want two things which seem compatible but are actually in conflict:

We want to hold as many true beliefs as possible (because true beliefs are useful to act upon),

and simultaneously

We want to hold as few false beliefs as possible (because false beliefs are generally detrimental if you act on them)5.

This is the core tension at the heart of the Knowledge Project67. If we just wanted to hold true beliefs we would simply throw every vaguely plausible proposition we came across into our belief box. And if we just wanted to avoid false beliefs then we would instead refuse to put anything into our belief box at all - we would be forever suspending judgement for even seemingly obvious claims8.

One solution to this problem is to have two processes running in parallel - one trying to gain truths and the other trying to avoid falsehoods. We could then fill our belief box by considering both processes together. Wait a damn minute - this is precisely an Adversarial System! You might recognise this simple solution if you squint: if two people run one of these processes each, it looks rather like the Socratic Method9. Maybe Socrates had the solution all along.

True Positives & False Negatives

Truth is Like A Box Of Lateral Flow Tests, You Never Know What You’re Going To Get - Anon

Trigger Warning: Allusions to the dark days of the pandemic

I’m not sure whether I ever caught the virus during the Covid pandemic. There was one point where I thought that I might have, but the test I took came back negative. Was I Covid clear?

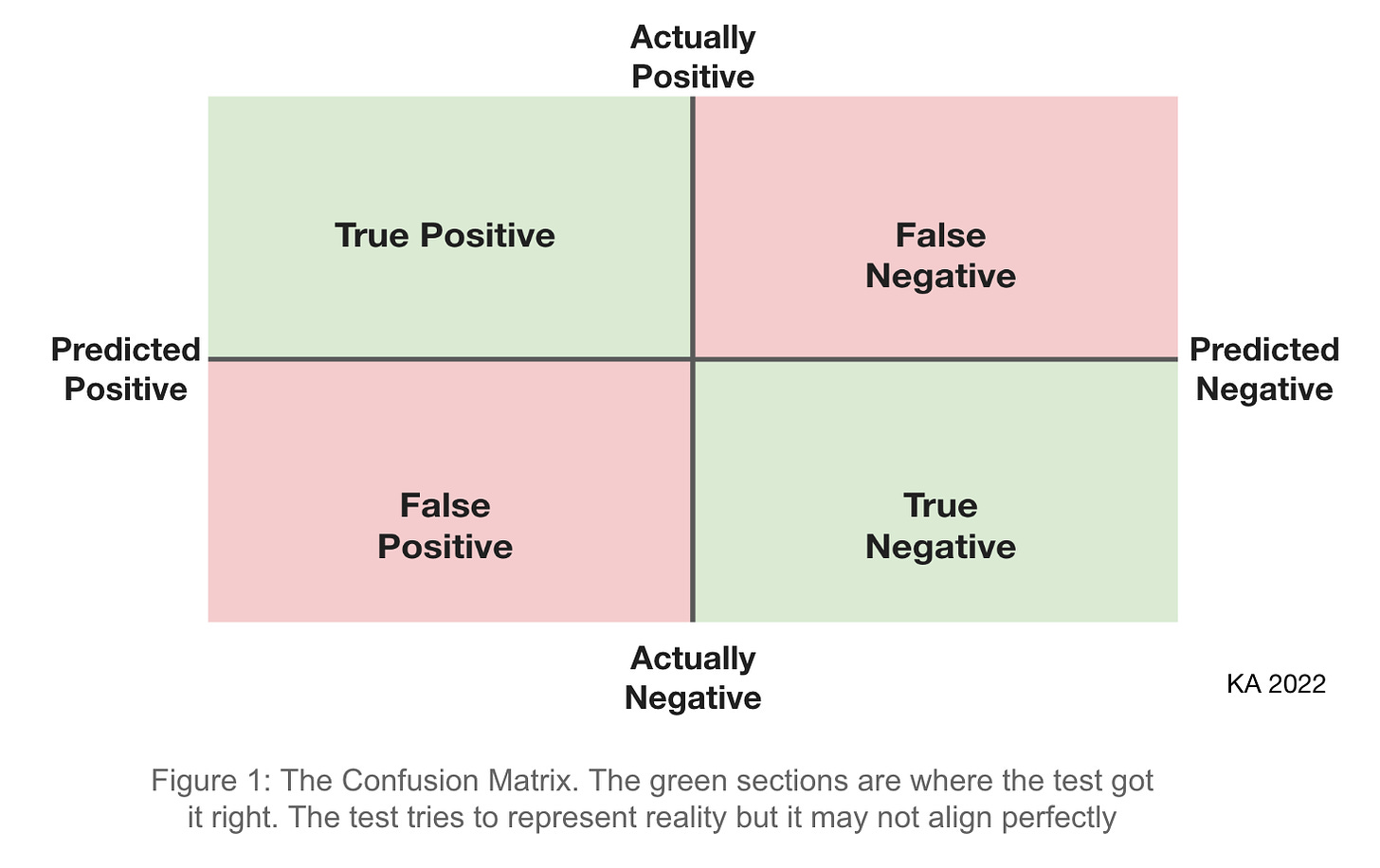

An accurate test is one which shows positive when you have the virus and negative otherwise. There are two possible failure modes. The test can say you’ve got the virus when you haven’t (a false positive) or the opposite (a false negative).

Similarly, a proposition generally either corresponds with reality or doesn't (or is nonsense/fuzzy). And we can think of belief as analogous to predicting the truth of the proposition. Here then, the Knowledge Project is aiming to create an accurate ‘map’ (or representation) of reality: one that corresponds to the ‘territory’ of reality as closely as possible10.
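To make the analogy concrete, here is a minimal sketch that labels beliefs the way we would label test results. The five propositions and the two caricatured believers (one who believes everything, one who believes nothing) are made up purely for illustration.

```python
from collections import Counter


def classify(believed: bool, actually_true: bool) -> str:
    """Label one belief the way we would label one test result."""
    if believed:
        return "true positive" if actually_true else "false positive"
    return "false negative" if actually_true else "true negative"


def tally(beliefs: list[bool], reality: list[bool]) -> Counter:
    """Count each outcome across a list of propositions."""
    return Counter(classify(b, r) for b, r in zip(beliefs, reality))


# Hypothetical 'territory': which of five propositions are actually true.
reality = [True, True, False, False, False]

credulous = tally([True] * 5, reality)   # throws everything into the belief box
sceptic = tally([False] * 5, reality)    # suspends judgement on everything

print(credulous)
print(sceptic)
```

The credulous believer never misses a truth but collects every falsehood as a false positive; the sceptic avoids every falsehood but misses every truth as a false negative. Neither map matches the territory well, which is the tension from earlier in the essay.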

Useful Knowledge & Strong Beliefs

Let’s consider two types of knowledge: useless knowledge and knowledge that is (at least potentially) useful. We may question whether the first type is knowledge at all but, even allowing it as knowledge, useless knowledge by definition can never change our actions.

An example of useful knowledge is knowing that an oven can cook our food - this allows us to use the oven to get something that we want, namely nourishment. Useless knowledge, on the other hand, would be knowing how many blades of grass there are in Central Park - it is unlikely that knowing this information would change our behaviour in any way11.

We might call a belief Strong if you are willing to act based on it (perhaps within some situational context) and Weak otherwise or if you are suspending judgement12. Note that this implies that only Strong beliefs can make up useful knowledge and so are the only beliefs that are (instrumentally) good for us13.

One helpful framework for good-faith adversaries is Strong Beliefs, Weakly Held. It’s an encouragement to have beliefs that you’re willing to take a stand for (Strong Beliefs) but simultaneously to not be too entrenched in your position. In the light of new evidence, you remain able to change your belief (Weakly Held14).

Artist Simone Breton, of the Parisian Surrealism scene, said that her collaborative pursuits allowed her to produce ‘images unimaginable by one mind alone’. We might say that epistemic (rather than artistic) collaboration grants us ideas unimaginable by one mind alone: it moves us towards truth that we wouldn’t have otherwise reached15.

This is the lofty promise of collaboration. Can it deliver?

2. Conditions for the Effectiveness of the Adversarial Approach

“Okay okay, this all sounds interesting but have you ever actually had a conversation before?” This would be a fair comment. People rarely change their minds. When is reaching knowledge via an adversarial approach actually achievable? There are considerations for both the situational context and the people involved.

Situation Conditions

First, as we noted earlier, for an effective adversarial system the situation should be concave (in the sense above).16

Secondly, we should not set up an adversarial system when all sides already agree too closely. There is little to gain when the beliefs are almost identical.

Thirdly, all sides should have the chance to come to a considered Strong Belief before the collaboration. In Noise, Kahneman writes that “independence is a prerequisite for the wisdom of crowds”17. Belief formation in private helps avoid simply conforming to the majority18 taste. Without this, the ‘wisdom of crowds’ can devolve into the ‘madness of crowds’: groupthink and mob rule.

Fourthly, the interlocutors should, if possible, have Skin In The Game. Since Strong Belief requires the willingness to act on the belief, already having an actionable interest in the claim is a good way to validate this willingness and to stop bad-faith weak-belief talk.

Person Conditions

The possible reasons that generally thoughtful people in good faith19 might not come to an agreement seem to be:

A deep philosophical disagreement - a fundamental difference in ideology

A lack of common knowledge - we cannot generally expect people to agree without the relevant information in common20. This indicates the imperative for information sharing and good explanations

An intelligence barrier21 (extraordinarily rare)

Beliefs functioning as identity - people who are less committed to their belief as an identity are more painlessly able to change their view (i.e. to practise ‘Weakly Held’).

One practical way to achieve these Person Conditions is to create social incentives and norms for intellectual virtues. This transforms the Person Conditions into an additional Situation Condition at the community level22.

So in summary an adversarial approach is generally a Very Bad Idea when you have a convex situation with an ideologically homogeneous (or polarised) group who lack relevant knowledge and who see little downside if they are wrong.

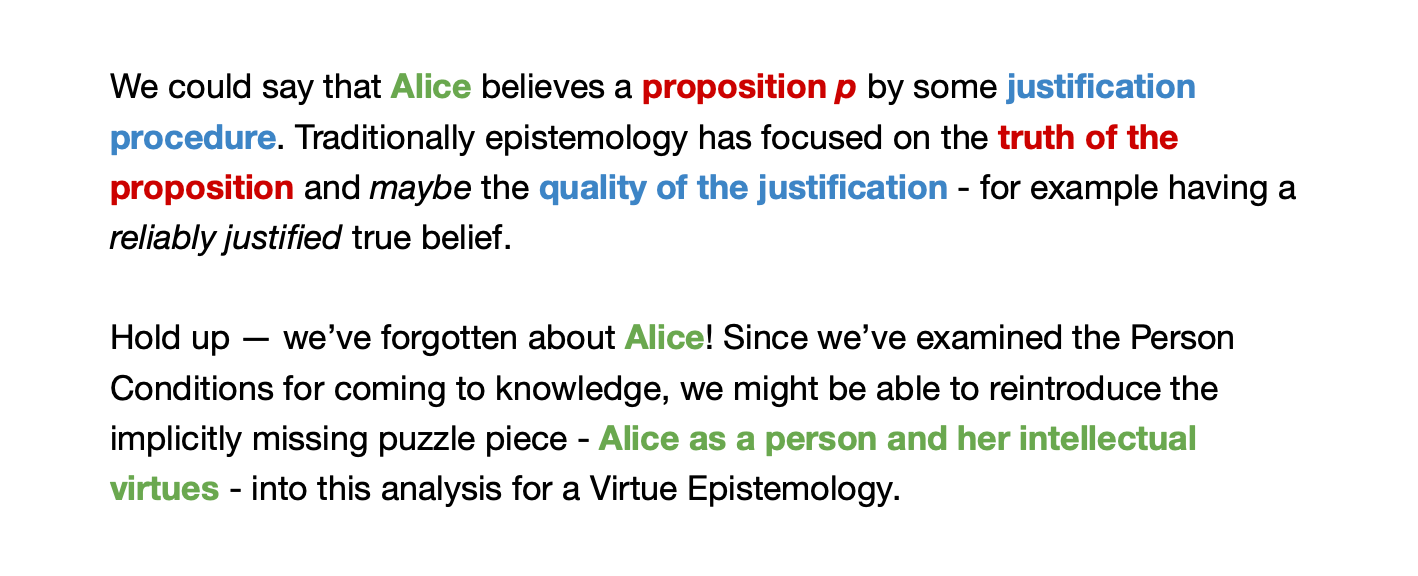

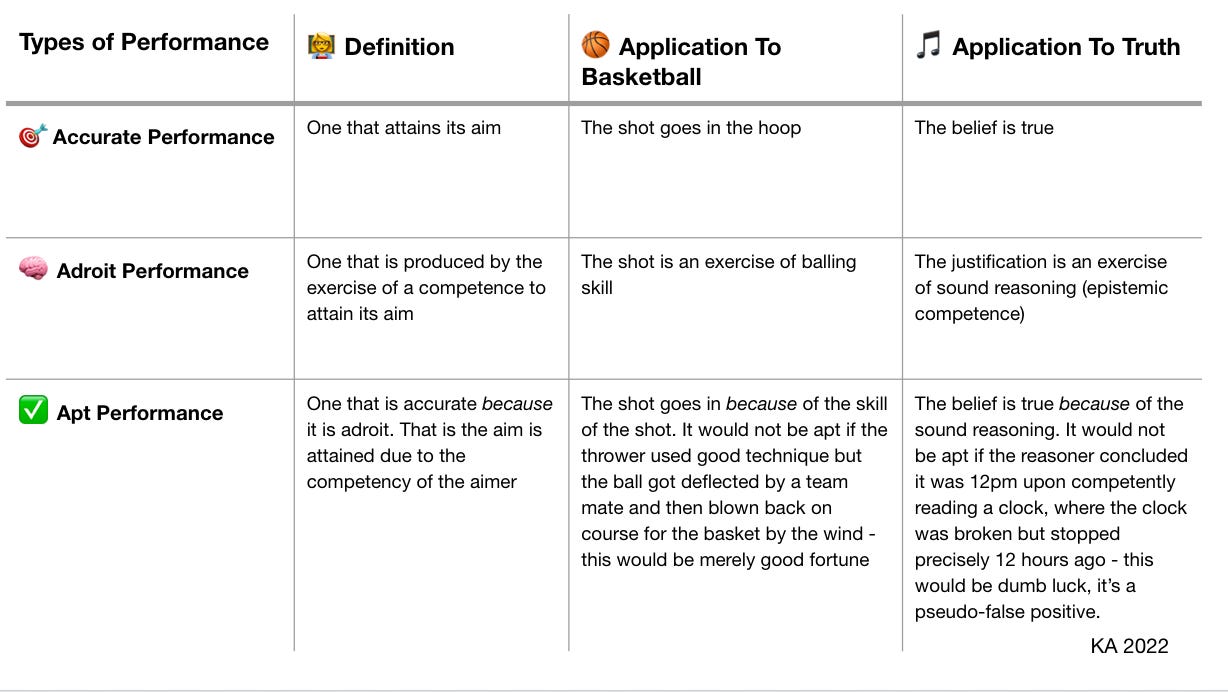

3. A Virtue Theory of Knowledge

Philosopher Ernest Sosa24 conceives of (first-order) knowledge as a performance with an aim that can be evaluated on three dimensions: accuracy (in the Covid test sense above), adroitness and aptness. A belief is accurate if it is true, adroit if it is formed through the believer’s competence, and apt if it is accurate because it is adroit. For Sosa beliefs become knowledge when they are apt.

The key here is that we see adroitness as competency derived from the intellectual virtues of the person25. Perhaps we value curiosity, humility, earnestness, courage etc as such virtues.

Sosa calls this first stage, knowledge as apt judgement, ‘Animal Knowledge’. Further, for Alice to have Human, ‘Reflective Knowledge’ of some proposition p we require her to aptly believe that she aptly believes p (second-order knowledge)26. That is, Alice makes a judgement on her first-order belief where this judgement is true because her (second-order) reasoning is reliable.

We can think of this second-order ‘Human’ knowledge as Alice being able to evaluate the process by which she came to believe p. Equivalently, Alice can successfully justify her belief against the sceptical challenges of a perfect epistemic agent. Ring all the bells and call us Mamma Mia 2 because here we go again: the purest Reflective Knowledge is exactly ‘being able to engage in an ideal Adversarial Collaboration and come out still aptly believing p’!27 In other words, ideal adversarial collaborations are the litmus test for Reflective Human Knowledge.

4. Adversaries as Metaphors We Live (and Die) By

To name things wrongly is to add to the misfortune of the world - Albert Camus

There’s a lurking issue in our current framing of the Adversarial Approach. In 1980, cognitive linguists George Lakoff and Mark Johnson dropped their seminal text Metaphors We Live By, outlining how the connotations of the language that we use unconsciously creep into the way we think and act.

For example we often use the metaphor of time as money in saying things like “How do you spend your time?” or “That flat tire cost me an hour”. Without realising it, our mental structures start to see time as a valuable commodity via our ideas about money. Western cultures, where this metaphor is more ingrained, are more likely to favour trading time for money in payment per hour.

Similarly we might realise that the connotations that the word ‘adversarial’ has are mostly aggressive and confrontational. This might be a bad thing for a project for Adversarial Collaborations!

Yikes. In light of this, ‘Adversarial Collaborations’ are at best the right idea but with a branding problem. And if we believe Lakoff & Johnson then a branding problem can become a material problem. Reframing the idea of Adversarial Collaboration as ‘Epistemic Social Convergence’ (or similar28) might allow us to move from a competitive, semi-violent metaphor to one of harmonious cooperation.

5. Agreeing to Collaborate, Collaborating to Agree

Collaboration is not a Faustian bargain for epistemic payoff but, instead, a way of being that supports better thinking in community: Epistemic Social Convergence.

It’s criticism not in the sense of an unhappy complainer, but in the sense of an art critic. It’s positive-sum, it’s appraisal, it’s a desire to align closer with reality. Here critique is not a dirty word. Thoughtful, good-faith critique is collaboration. Optimistically this is a vision for collaboration as a move toward apt judgement: knowledge that is Reflective and above all, Human.

This essay was originally written to share with a group of friends writing together. I leave in the postscript for completeness.

Epilogue: Essay Clubs as Epistemic Collaborators

Might we conceive of an essay club as a community for Epistemic Social Convergence?

In the early computing age, mathematician Richard Hamming wrote the once-revelatory line 'The purpose of computing is insight, not numbers'. Now we may update this by saying that the purpose of computers isn’t Numbers, nor even only Insight; there’s another purpose - Community. In our networked age, social media and instant communication are primary uses of the internet. We understand ourselves more fully in relation to the other; the most enlightened self is the self inside a collective whole.

Similarly, in a writing club, there’s great value in essays because they (at least aspirationally) offer Useful Knowledge to act on. But there’s additional value in Insights through Community: it’s higher knowledge as a group, it’s crowdsourced confidence for Strong Beliefs, it’s friendships providing context for positive social norms for developing Intellectual Virtue, it’s approaching ideas unimaginable by one mind alone.

Here’s to thriving collaborative Epistemic Social Convergence. I can’t wait for you to critique me.

In Mark’s gospel Jesus asks rhetorically ‘What will it profit a man if he gains the whole world, and loses his soul?’

Mark 8:36 NKJV

Indeed Faustian bargains are tragic exactly because what is given up is later realised to be more valuable than what was gained.

This practice is sometimes referred to as being the 'Devil's Advocate': I'm refraining from using this terminology because of its modern connotations and, as will become apparent, I don't generally believe that arguing in bad faith for a position that you don't actually hold is effective or kind.

Since 1983 the office of the Promoter of the Faith has been greatly reduced in power, and as a result the decision to canonise is essentially entirely down to the contemporary Pope. This has resulted in canonisations being more frequent, quicker after the death of an individual and more sensational - none of which speaks to it being a more rigorous practice.

If you like Meta-science:

A take on Daniel Kahneman’s Adversarial Collaboration methodology for Social Science is having its first medium-scale academic trial at UPenn, headed by Phil Tetlock & Cory Clark, from July 2022.

https://penntoday.upenn.edu/news/pursuit-scientific-truth-adversarial-collaboration-Tetlock-Clark

It's possibly early to say but perhaps this approach has the potential to improve the methods of science (even beyond the potential of the Open Science revolution of publishing data to reduce p-hacking and non-replicating psychology studies).

A legal system is not under pressure because the two sides have different positions; it’s strengthened. The system is better than the sum of its parts - in the same way that the structure of an all star team can make it more than simply a collection of ‘all star players’.

Also note that simply having lots of true beliefs is not knowledge; you have to know that you know, and this requires reducing and correcting error.

Without this you dilute your true beliefs with false ones.

If You Like (IYL) Epistemology:

For now, we can simply consider knowledge as approximately "justified, true belief" (JTB), though the argument generalises beyond this (naive) conception of knowledge (attributed to Plato's Meno).

In particular one property of knowledge that we will use is that a statement that you can have knowledge of is necessarily one that is true. That is you can't know something false. (We will later present an alternative view to JTB.)

William James elucidates this tension in 'The Will To Believe' excellently. His partial solution to this problem is epistemic pragmatism.

We are interested in this argument but our solution will differ slightly as we proceed.

Also thanks to Agnes Callard’s work on knowing.

After all how confident are you that if you throw a ball up in the air, it will come back down again?

Probably pretty confident but not 100%: you probably have some (negligible but real) doubt in the laws of physics as we understand them today and some associated doubt in the validity of Inductive Reasoning.

If you Like Adam Smith:

We might consider this as an adversarial division of epistemic labour.

This would indeed point to why it would be a good idea to split up these roles between multiple people, rather than demand that each person performs both tasks themselves (though it is of course possible for a person to try to play both sides). In splitting up the roles we get the benefits of the specialisation of labour.

We might say that sceptics care a lot about reducing false positives while naive or ‘woo’ people care a lot about never missing out on a true positive, risking false positives on the way as collateral.

Where we should fall along that scale (i.e. the golden mean in the sense of On Compromise) is an open question but, if knowledge is of instrumental value, then it is at least an empirical question. We choose the position (perhaps contextually dependent) that maximises our intrinsic value.

As it turns out, given an acceptable False Positive Rate, there's some helpful maths that tells us what credence we require in a belief before we let it into our belief box. Though this kind of solution is great for AI algorithms, it doesn't generalise perfectly to humans, since we are generally not as precise. Nonetheless, theoretical knowledge of such a procedure can give us a useful framework for understanding our belief systems.
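One possible version of that maths is the standard expected-value threshold from decision theory. I'm not claiming it is the only procedure that fits the description; treat it as a sketch. The function names, the gain and loss figures and the example credences are all made up, and the second function assumes our credences are well calibrated. Strictly, it reports the share of admitted beliefs that we expect to be false, which is a close cousin of the False Positive Rate rather than the same thing.

```python
def credence_threshold(gain_if_true: float, loss_if_false: float) -> float:
    """Smallest credence p at which acting on a belief breaks even.

    Acting has expected value p * gain - (1 - p) * loss, which is
    non-negative exactly when p >= loss / (gain + loss).
    """
    return loss_if_false / (gain_if_true + loss_if_false)


def expected_false_share(credences: list[float], threshold: float) -> float:
    """Expected fraction of admitted beliefs that are false.

    Assumes calibration: a belief held at credence p is false with
    probability 1 - p.
    """
    admitted = [p for p in credences if p >= threshold]
    if not admitted:
        return 0.0
    return sum(1 - p for p in admitted) / len(admitted)


# Hypothetical stakes: being wrong costs nine times what being right gains.
threshold = credence_threshold(gain_if_true=1.0, loss_if_false=9.0)

# Hypothetical credences in four propositions.
credences = [0.95, 0.9, 0.6, 0.3]
print(threshold, expected_false_share(credences, threshold))
```

The harsher the cost of a false belief relative to the gain from a true one, the higher the bar for entry into the belief box, and the fewer false beliefs we expect to find there.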

We might note here that usefulness is initially a relational property. Something is useful for some purpose or toward some aim. The aim might be flourishing or it might be something more proximate.

But it is certainly true that some things are more useful than others in general: an iPhone is more useful than a single speck of dust in almost all situations, no matter the (reasonable) aim. So it is possible for us to talk informally about usefulness as a property without being explicit about the aim. And it is additionally possible to talk about usefulness being a good thing, insofar as reasonable aims are good.

This generalises to knowledge.

We might say that we don’t want beliefs we can stand by in times of challenge; we want beliefs we can confidently stand on - we want a strong foundation.

Socrates posits that knowledge is good for its own sake as do some modern 'objective list theorists'. In Plato's Philebus this is contrasted with the (hedonist) view that pleasure is the true intrinsic good.

Alternatively we might think of knowledge as having only instrumental value. In this case gaining useless knowledge is at best neutral and in reality negative since knowledge acquisition is not costless.

Weak beliefs, then, are negative.

We might be initially worried that ‘Weakly Held’ could encourage us to not be too critical of our beliefs if we can change them at will.

'Strong beliefs' are a counterweight against this: you have to act on your belief! And when you act on falsehoods generally - especially on practical matters and especially if you have Skin in the Game - you often find out pretty quickly that they were false!

Daniel Kahneman writes that when he's working in collaboration with someone he disagrees with, they both get a '15 IQ point boost' by being able to come up with better theories when challenged by a good interlocutor.

In many ways, this is what teachers hope for from their students when they exercise the Socratic method, for example in the Oxbridge supervision/tutorial method of teaching.

We previously discussed (in On Compromise) the likelihood that most knowledge problems (at least when framed correctly) are concave.

Statisticians have known this for a long time. The formal version of this argument is "statistical independence is required for the Central Limit Theorem".
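One way to see why independence matters is the textbook formula for the variance of an average of n equally noisy estimates whose errors share a pairwise correlation rho. The sketch below just evaluates that formula; the function name and the example numbers are mine, and equal variances and equal correlations are simplifying assumptions.

```python
def variance_of_crowd_mean(n: int, sigma2: float = 1.0, rho: float = 0.0) -> float:
    """Variance of the average of n estimates, each with error variance sigma2,
    where every pair of errors has correlation rho.

    Var(mean) = sigma2 * (1 + (n - 1) * rho) / n
    """
    if n < 1:
        raise ValueError("need at least one estimate")
    if n > 1 and not (-1 / (n - 1) <= rho <= 1):
        raise ValueError("rho is not a valid common correlation for n estimates")
    return sigma2 * (1 + (n - 1) * rho) / n


for n in (1, 10, 100, 10_000):
    independent = variance_of_crowd_mean(n, rho=0.0)
    conforming = variance_of_crowd_mean(n, rho=0.5)
    print(n, independent, conforming)
```

With independent errors the crowd's error shrinks towards zero as the crowd grows. With correlated errors it never drops below rho times sigma2, however many people we add: a crowd that conforms is only a little wiser than one of its members.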

The majority here may not be in pure democratic counting but there may also be a power component. That is David might conform more to his boss at work or the opinion of some academic authority than to anyone else.

One important component of good faith here is that the interlocutors are actually looking to come to knowledge. If people feel that it would be beneficial to have a distorted view of the world then this can clearly lead to motivated reasoning.

If you Like Politics and/or Game Theory:

If each person's information isn't good enough to make them more likely right than wrong, then Condorcet's jury theorem from political science tells us that the majority verdict gets worse as we add more people!

In particular, more formally, mathematician and game theorist Robert Aumann illustrated the importance of common knowledge in his Agreement Theorem (work which led up to his Econ Nobel Prize with Thomas Schelling). It states (seemingly somewhat miraculously before you look at the short and intuitive proof) that "rational agents with common priors, whose current beliefs are common knowledge between them, cannot agree to disagree" (paraphrased).
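The Condorcet claim mentioned above is easy to check numerically. This is a minimal sketch under the theorem's usual assumptions: an odd number of voters who vote independently, each right with the same probability p. The function name and the example values of p are mine.

```python
from math import comb


def majority_correct(n: int, p: float) -> float:
    """Probability that a simple majority of n independent voters is right,
    when each voter is right with probability p. n must be odd (no ties)."""
    if n < 1 or n % 2 == 0:
        raise ValueError("n must be a positive odd number")
    needed = n // 2 + 1  # smallest number of correct votes that wins
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(needed, n + 1)
    )


for n in (1, 3, 11, 101):
    print(n, majority_correct(n, 0.6), majority_correct(n, 0.4))
```

When each voter is slightly better than a coin flip (p = 0.6) the majority gets more reliable as the group grows; when each is slightly worse (p = 0.4) it gets less reliable. More people only help if the information they hold is good enough.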

It might be infeasible to teach a chimpanzee or a child quantum mechanics and it may be that some people have different mental strengths and are unable to grasp certain things. This seems to be somewhat possible but practically almost never the case - people are generally incredibly smart and able to grasp a lot.

There might also be non-intellectual virtues that a community wants to cultivate for the sake of sustainability.

For example, perhaps few people want to be in community with a troll who is sometimes right but always rude.

In the same way that virtue ethics shifts from moral acts to moral actors, virtue epistemology focuses more on knowers rather than on the semantic properties of Peter Piper’s proper propositions.

Talk about nominative determinism, his name is literally a virtue!! And we all know how important it is to be earnest.

Sosa is the name of the Scarface character hmm, I mean he was a virtue theorist of sorts I guess?

IYL Epistemology you might see 'knowledge as an apt performance' as a neat solution to the Gettier problem of knowledge.

Roughly speaking, the Gettier problem is "what is the difference between knowledge and true belief if not justification?" Gettier showed that justification seems insufficient to be the bridge in his landmark paper.

Particular thanks to Christoph Kelp among others for a clear summary of Sosa’s epistemology.

Note that we're not saying that you don't have reflective knowledge until you've done an adversarial collaboration, rather that you have knowledge once you could engage in an adversarial collaboration, whether you have or not.

Of course it helps that, in addition, adversarial collaborations have the property that they help you come to knowledge as well.

The name isn't desperately catchy, I'm accepting requests for a better one.

The initials ESC are fun though!